Vision-Based Robotic Pinball

This project explored whether reinforcement learning and computer vision techniques, commonly applied in virtual environments, could be extended to a real-world robotic system by constructing an autonomous pinball machine. The work combined physical hardware actuation, real-time vision pipelines, and deep reinforcement learning agents trained in simulation to investigate how perception, control, and learning interact in a high-speed, partially observable environment.

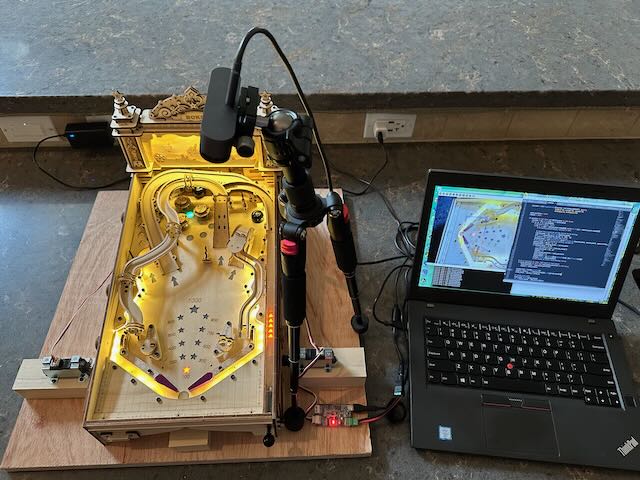

A tabletop pinball platform was instrumented with linear actuators to control the flippers and a high-frame-rate camera to observe gameplay. In parallel, a virtual pinball environment built on the Arcade Learning Environment, accessed through OpenAI Gym, was used to train a Deep Q-Network agent. The agent operated solely on pixel-level visual input, with a refined action space and custom reward shaping intended to approximate real machine behavior.

The project surfaced core challenges in deploying learning-based systems in physical domains. Significant effort was devoted to ball detection and tracking using contour analysis, Hough transforms, background subtraction, and Kalman filtering. The work revealed the sensitivity of vision systems to reflections, lighting, vibration, and sensor noise. While a modified ball improved detection performance, full closed-loop learning on the physical platform remained constrained by perception reliability within the course time frame.

In simulation, extended training over millions of environment steps demonstrated partial skill acquisition, such as reliable ball launching and occasional high-scoring strategies, but also exposed difficulties caused by sparse rewards, hidden environmental dynamics, and delayed feedback. These results emphasized the limits of purely visual state representations in complex control tasks and motivated future work in hybrid perception models and richer learning signals.

Overall, the project functioned as a systems-level investigation into AI-driven robotics, highlighting the integration of sensing, actuation, real-time software, and learning algorithms under real-world constraints. It reflects an engineering approach grounded in experimentation, instrumentation, and iteration across both physical and simulated platforms.

Motivation

This project was motivated by a simple but technically rich question: can a robot learn to play a real-world pinball machine? While reinforcement learning has been widely explored in virtual environments and video games, far less work has been done on transferring those techniques to physical systems operating under strict timing, sensing, and actuation constraints. Pinball, while immediately intuitive to human observers, is an especially challenging testbed for a computer-controlled robotic system: it combines high-speed dynamics, partial observability, reflective surfaces, and millisecond-scale control loops.

The project originated in Arizona State University’s CSE 598: Advances in Robot Learning course, where the goal was to apply modern learning and control methods to a real or simulated system within a single semester. My project partner and I pursued both sides of the problem: building a physical robotic pinball platform driven by computer vision and real-time control, and experimenting with reinforcement learning using a virtual pinball environment based on the Atari 2600 through OpenAI Gym.

By tackling perception, prediction, and actuation together, this project served as an end-to-end exploration of AI systems operating in the physical world, bridging machine learning, embedded control, and systems integration under real-world constraints. The work reflects my broader interest in building compute-driven robotic systems where algorithmic performance is inseparable from hardware, latency budgets, and physical interfaces.

System Architecture

The robotic pinball platform was designed as a closed-loop perception and control system operating under real-time constraints. A USB camera mounted above the playfield captures video of the game surface, which is processed by a computer vision pipeline to detect and track the pinball in each frame. The detected ball position is filtered using a Kalman estimator to produce a smoother estimate of position and velocity suitable for control decisions.
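To illustrate the filtering step, here is a minimal sketch of a constant-velocity Kalman filter for a single image axis, written in plain Python. It is not the project's actual code. The class name `AxisKalman`, the noise values `accel_var` and `meas_var`, and the choice to run one independent filter per axis are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class AxisKalman:
    """Constant-velocity Kalman filter for one image axis (hypothetical helper)."""
    pos: float = 0.0        # estimated position, px
    vel: float = 0.0        # estimated velocity, px/s
    # Covariance entries of [[p_pp, p_pv], [p_pv, p_vv]]
    p_pp: float = 1e3
    p_pv: float = 0.0
    p_vv: float = 1e3
    accel_var: float = 5e4  # assumed process noise, (px/s^2)^2
    meas_var: float = 4.0   # assumed measurement noise, px^2

    def predict(self, dt: float) -> None:
        """Propagate state and covariance by dt seconds (F = [[1, dt], [0, 1]])."""
        self.pos += self.vel * dt
        # P = F P F^T
        p_pp = self.p_pp + 2.0 * dt * self.p_pv + dt * dt * self.p_vv
        p_pv = self.p_pv + dt * self.p_vv
        p_vv = self.p_vv
        # Add Q for a white-noise acceleration model
        q = self.accel_var
        self.p_pp = p_pp + q * dt ** 4 / 4.0
        self.p_pv = p_pv + q * dt ** 3 / 2.0
        self.p_vv = p_vv + q * dt ** 2

    def update(self, measured_pos: float) -> None:
        """Fuse one position measurement (H = [1, 0])."""
        innovation = measured_pos - self.pos
        s = self.p_pp + self.meas_var   # innovation variance
        k_p = self.p_pp / s             # Kalman gain for position
        k_v = self.p_pv / s             # Kalman gain for velocity
        self.pos += k_p * innovation
        self.vel += k_v * innovation
        # P = (I - K H) P
        p_pp = (1.0 - k_p) * self.p_pp
        p_pv = (1.0 - k_p) * self.p_pv
        p_vv = self.p_vv - k_v * self.p_pv
        self.p_pp, self.p_pv, self.p_vv = p_pp, p_pv, p_vv
```

In use, `predict(dt)` would be called once per frame with the frame interval, followed by `update(...)` whenever the detector reports a ball position. On frames where detection fails, calling only `predict` lets the estimate coast on the last velocity.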

A multi-threaded control program evaluates the estimated ball trajectory and determines when to actuate the flippers. Control commands are transmitted to linear actuators that physically trigger the pinball flippers. Because the ball can travel across the playfield in fractions of a second, the entire perception-to-actuation loop must execute with minimal latency.
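One way to reason about the timing requirement is to fire the flipper when the predicted time for the ball to reach the flipper line drops below the total perception-to-actuation latency. The sketch below shows that decision under simplifying assumptions: straight-line motion, image y increasing toward the flippers, and a single lumped latency figure. The function name `should_fire` and all of its parameters are hypothetical and do not come from the project code.

```python
def should_fire(y_px: float, vy_px_s: float,
                flipper_y_px: float, latency_s: float) -> bool:
    """Return True when the flipper command should be sent now.

    y_px         -- filtered ball position along the playfield axis (px)
    vy_px_s      -- filtered ball velocity along that axis (px/s)
    flipper_y_px -- assumed image row of the flipper contact line (px)
    latency_s    -- assumed camera + processing + actuator delay (s)
    """
    if vy_px_s <= 0.0:
        return False  # ball is stationary or moving away from the flippers
    if y_px >= flipper_y_px:
        return False  # ball is already at or past the contact line
    time_to_line = (flipper_y_px - y_px) / vy_px_s
    # Fire once the remaining travel time is within the latency budget
    return time_to_line <= latency_s
```

For example, a ball 20 px above the line moving at 1000 px/s arrives in 20 ms, so with an assumed 30 ms latency the command has to be issued immediately.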

The system operates continuously as a feedback loop:

Camera → Vision Processing → State Estimation → Control Logic → Flipper Actuators → Physical Game State → Camera

In parallel with the physical system, a reinforcement learning environment based on the Atari pinball game was used to explore algorithmic strategies for autonomous gameplay. This simulation environment allowed experimentation with learning-based agents while the hardware platform focused on perception, tracking, and real-time control challenges.

Key Technologies

Computer Vision

- OpenCV-based detection pipeline

- Ball detection using contour analysis and geometric filtering

- Background subtraction and image preprocessing

- Robustness challenges from reflections, lighting variation, and occlusions
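As an illustration of geometric filtering, the sketch below scores a closed contour by its area and circularity, 4πA/P², which equals 1 for a perfect circle and drops for elongated or angular shapes. It is written in plain Python so it runs without dependencies. In an OpenCV pipeline the area and perimeter would more likely come from `cv2.contourArea` and `cv2.arcLength`. The function names and the threshold values are assumptions, not the project's tuned parameters.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def polygon_area(points: List[Point]) -> float:
    """Area of a closed polygon via the shoelace formula."""
    total = 0.0
    for (x1, y1), (x2, y2) in zip(points, points[1:] + points[:1]):
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def polygon_perimeter(points: List[Point]) -> float:
    """Perimeter of a closed polygon."""
    return sum(math.dist(a, b) for a, b in zip(points, points[1:] + points[:1]))


def circularity(points: List[Point]) -> float:
    """4*pi*A / P^2: 1.0 for a circle, about 0.785 for a square."""
    perimeter = polygon_perimeter(points)
    if perimeter == 0.0:
        return 0.0
    return 4.0 * math.pi * polygon_area(points) / perimeter ** 2


def is_ball_like(points: List[Point], min_area: float = 100.0,
                 max_area: float = 1000.0, min_circularity: float = 0.85) -> bool:
    """Keep contours whose size and roundness match the ball (assumed thresholds)."""
    area = polygon_area(points)
    return min_area <= area <= max_area and circularity(points) >= min_circularity
```

A filter like this rejects long streaks from specular reflections and rectangular playfield features, but it cannot distinguish the ball from other round objects of similar size, which is one reason temporal filtering is still needed downstream.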

State Estimation

- Kalman filtering for smoothing noisy position measurements

- Velocity estimation to predict ball trajectory

- Filtering required for reliable control decisions under sensor noise

Control System

- Multi-threaded Python control software

- Real-time event detection for flipper actuation

- Timing-sensitive control loop to meet millisecond-scale deadlines
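The multi-threaded structure can be sketched as a capture thread feeding a control thread through a queue. The example below uses only the Python standard library and replaces the camera and actuator with stand-ins, so the names `run_pipeline` and `decide` are hypothetical and the layout is an assumption about how such a program might be organized, not a description of the actual software.

```python
import queue
import threading
from typing import Callable, Iterable, List


def run_pipeline(measurements: Iterable[float],
                 decide: Callable[[float], bool]) -> List[float]:
    """Pass measurements from a capture thread to a control thread.

    Returns the measurements for which `decide` requested an actuation.
    """
    frames: "queue.Queue" = queue.Queue()
    actions: List[float] = []

    def capture() -> None:
        for m in measurements:      # stand-in for camera reads + vision
            frames.put(m)
        frames.put(None)            # sentinel: no more frames

    def control() -> None:
        while True:
            m = frames.get()        # blocks until a measurement arrives
            if m is None:
                break
            if decide(m):
                actions.append(m)   # stand-in for an actuator command

    threads = [threading.Thread(target=capture),
               threading.Thread(target=control)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return actions
```

This version processes every frame in order, which keeps the example deterministic. A latency-critical loop might instead keep only the newest frame and discard stale ones, trading completeness for freshness.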

Hardware Platform

- Tabletop pinball machine instrumented for experimentation

- Linear actuators (MightyZap) used to trigger flippers

- USB camera mounted above playfield for vision feedback

Reinforcement Learning Environment

- Simulation used to explore learning approaches before physical deployment

- Atari 2600 pinball environment using OpenAI Gym / Gymnasium

- Deep Q-Network experiments for autonomous gameplay strategies
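For readers unfamiliar with Deep Q-Networks, the two pieces of logic at the core of the method are the temporal-difference target used to train the value network and the epsilon-greedy rule used to choose actions. The sketch below shows both on plain Python lists, leaving out the neural network, replay buffer, and target network entirely. The function names and the default discount factor are assumptions chosen for illustration.

```python
import random
from typing import Sequence


def td_target(reward: float, next_q_values: Sequence[float],
              done: bool, gamma: float = 0.99) -> float:
    """One-step Q-learning target: r + gamma * max_a' Q(s', a').

    When the episode has ended there is no next state, so the target
    is just the reward.
    """
    if done:
        return reward
    return reward + gamma * max(next_q_values)


def epsilon_greedy(q_values: Sequence[float], epsilon: float,
                   rng: random.Random = random.Random()) -> int:
    """Pick a random action with probability epsilon, else the greedy one."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=lambda a: q_values[a])
```

The target makes the effect of sparse rewards visible: when `reward` is zero for long stretches, the only learning signal is the bootstrapped `max` term, so errors in the value estimates propagate slowly back to the actions that caused a score.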